Stop relying on outside providers for your company’s intelligence. We build and deploy private AI systems on your own servers, giving you full control over your data, dedicated capacity you don’t share with other customers, and no per-token usage fees.

Get in Touch

Many enterprises are building their future on borrowed ground. Relying on public APIs creates hidden dependencies that put security, margins, and autonomy at risk.

The Scaling Tax

Public AI providers charge you for every token (word fragment) your system reads and writes.

The more you use AI, the more you pay. Your bill grows with your usage, every month, with no ceiling.

Data Privacy

Your proprietary data is sent to third-party servers for processing.

Every question you ask runs on someone else's servers. Your business data, customer details, and internal know-how all leave your network — and you don't fully control where it goes from there.

Zero Control

Your operations depend entirely on another company’s tech.

If they change their prices or go offline, your business stops because you don’t own the underlying engine.

Three things you get when you own your AI instead of renting it.

Most AI services charge you for every task the system performs. We move you to your own hardware, replacing unpredictable usage-based bills with a fixed infrastructure cost that doesn’t climb with every request.

Standard AI tools require you to send private company data to external servers. Our systems stay behind your own firewall, ensuring your proprietary knowledge and customer data never leave your control.

You decide when your AI updates, how it gets trained, and when it goes live. No surprise pricing changes, no forced upgrades, no waiting on someone else's roadmap.

We pick and tune the right open-source AI models for your business, then host them on dedicated servers in your own environment, turning a bill that grows with usage into a fixed infrastructure cost.

We connect your company’s files and data to the AI behind your own firewall, so the AI learns your business without your data ever leaving your network.

We build voice and text channels reserved entirely for your business. You decide the capacity, the speed, and the priority — your AI isn’t sharing GPU time with millions of other users on a public API.

Open-source moves fast. We deploy whatever fits your workload — from current state-of-the-art to whatever ships next quarter — across the models, infrastructure, inference, and voice stacks below.

Open-Source Models

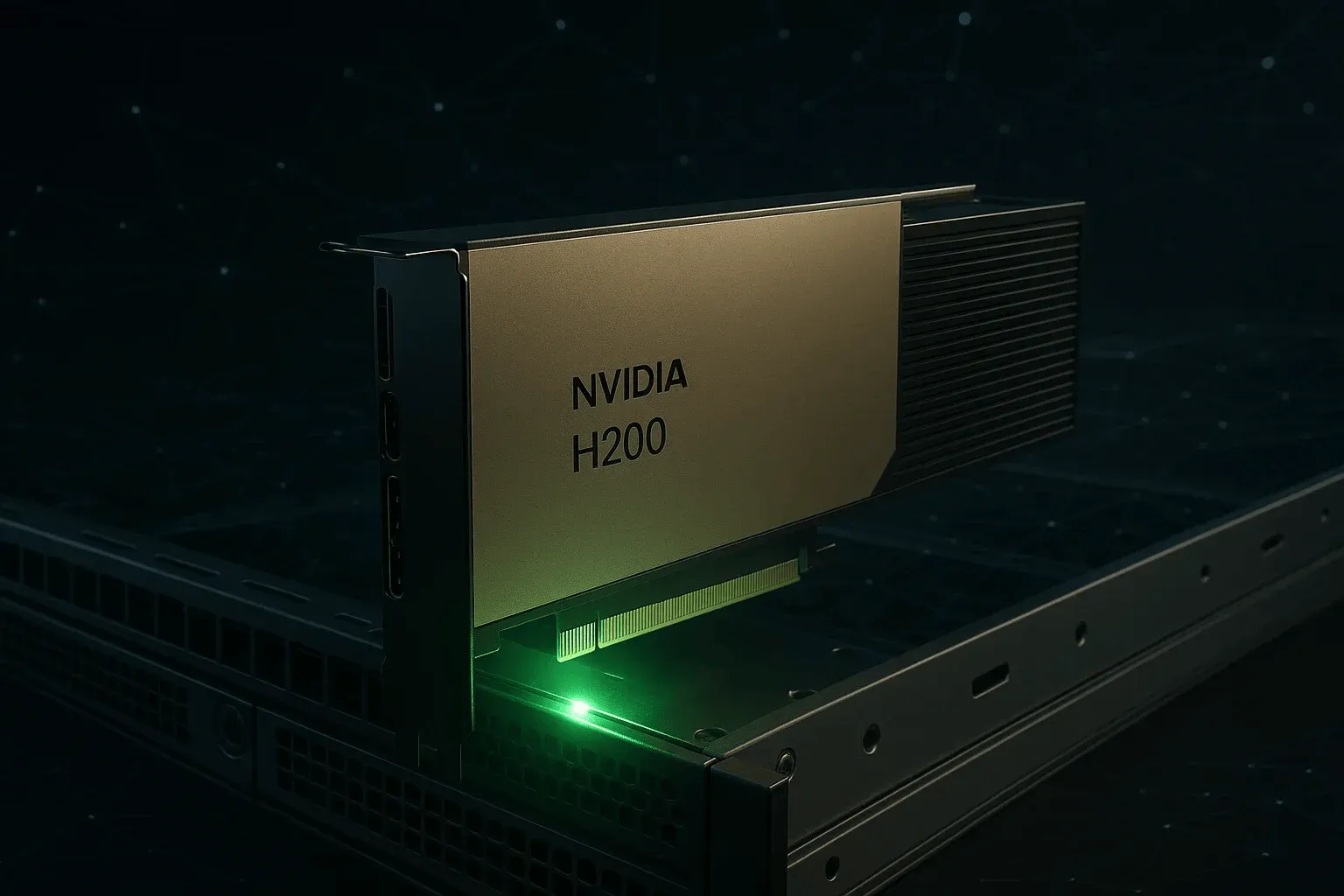

Infrastructure

Inference & Orchestration

Voice & Speech

+ whatever ships next

Answers to the questions teams ask before they move AI inference inside their own infrastructure.

Self-hosted AI means the models run inside your infrastructure: your AWS account, your private VPC, or on-prem GPUs. No prompts, embeddings, or customer data leave your network. You hold the keys, the inference logs, and the model weights.
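One way to enforce "no prompts leave your network" in client code is a guard that refuses to send anything to an endpoint outside private address space. The sketch below assumes Python 3.9+ and uses only the standard library; `endpoint_is_private` and `send_prompt` are hypothetical helper names for illustration, not part of any product or library API.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def endpoint_is_private(url: str) -> bool:
    """Return True only if every address the URL's host maps to is private or loopback."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        # IP literal (e.g. 10.0.0.5 or ::1): check it directly, no DNS lookup.
        addrs = [ipaddress.ip_address(host)]
    except ValueError:
        try:
            # Hostname: resolve it and check every address it maps to.
            infos = socket.getaddrinfo(host, None)
        except OSError:
            return False  # fail closed if the name cannot be resolved
        addrs = [ipaddress.ip_address(info[4][0]) for info in infos]
    return bool(addrs) and all(a.is_private or a.is_loopback for a in addrs)


def send_prompt(url: str, prompt: str) -> None:
    # Hypothetical client entry point: refuse to send anything off-network.
    if not endpoint_is_private(url):
        raise PermissionError(f"refusing to send prompt to non-private endpoint: {url}")
    # Hand the prompt to your inference client here.
```

Failing closed on unresolvable names is a deliberate choice: a guard like this should block by default rather than guess.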

We deploy whatever fits the workload: Llama, Qwen, Mistral, DeepSeek, and other open-source families as the landscape evolves. Before recommending a model, we benchmark candidates against your real data, so you get the speed and accuracy you need without paying for capability you don't.
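The "benchmark before recommending" step boils down to a simple rule: among the candidates that clear your accuracy and latency bars on your own evaluation set, pick the cheapest to serve. A minimal sketch, assuming exact-match scoring (real evaluations often use task-specific metrics) and a hypothetical `cost_per_hour` figure per candidate; the `generate` callables stand in for whatever inference client you use.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    name: str
    generate: Callable[[str], str]  # wraps your inference client for this model
    cost_per_hour: float            # hypothetical serving cost for this model's GPUs


def evaluate(candidate: Candidate, eval_set: list[tuple[str, str]]) -> tuple[float, float]:
    """Return (exact-match accuracy, mean seconds per request) on the eval set."""
    correct = 0
    start = time.perf_counter()
    for prompt, expected in eval_set:
        if candidate.generate(prompt).strip() == expected:
            correct += 1
    mean_latency = (time.perf_counter() - start) / len(eval_set)
    return correct / len(eval_set), mean_latency


def pick_model(candidates, eval_set, min_accuracy, max_latency_s) -> Optional[Candidate]:
    """Cheapest candidate that clears both the accuracy and latency bars, or None."""
    passing = []
    for c in candidates:
        accuracy, latency = evaluate(c, eval_set)
        if accuracy >= min_accuracy and latency <= max_latency_s:
            passing.append(c)
    return min(passing, key=lambda c: c.cost_per_hour, default=None)
```

Returning `None` when nothing passes makes "no candidate is good enough yet" an explicit outcome instead of silently picking the least-bad model.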

Self-hosting pays for itself quickly once volume is high enough. Public API costs scale linearly with token volume; dedicated infrastructure is a fixed cost. Most clients pass break-even within 3–6 months and from then on pay 60–80% less per million tokens.
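The break-even arithmetic follows directly from "linear vs. fixed": self-hosting wins in month n once setup + n × infra ≤ n × API bill. A minimal sketch; all inputs below are illustrative assumptions, not client data, and the model ignores factors such as staff time and GPU utilization ceilings.

```python
import math
from typing import Optional


def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    return tokens_per_month / 1_000_000 * price_per_million


def breakeven_month(setup_cost, monthly_infra_cost, tokens_per_month,
                    api_price_per_million) -> Optional[int]:
    """First whole month where cumulative self-hosted cost <= cumulative API cost."""
    monthly_savings = (monthly_api_cost(tokens_per_month, api_price_per_million)
                       - monthly_infra_cost)
    if monthly_savings <= 0:
        return None  # at this volume the public API stays cheaper
    # setup + n * infra <= n * api  <=>  n >= setup / (api - infra)
    return math.ceil(setup_cost / monthly_savings)


def self_hosted_cost_per_million(monthly_infra_cost, tokens_per_month) -> float:
    return monthly_infra_cost / (tokens_per_month / 1_000_000)


# Illustrative inputs: 2B tokens/month at $10 per million on the API,
# $8,000/month for dedicated GPUs, $50,000 one-time setup.
print(breakeven_month(50_000, 8_000, 2_000_000_000, 10.0))  # 5
print(self_hosted_cost_per_million(8_000, 2_000_000_000))    # 4.0
```

The `None` branch matters: at low volume the fixed cost never amortizes, which is why the savings claim depends on scale.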

You don't need an in-house ML or GPU team. We handle the full stack: model selection, quantization, GPU provisioning (AWS, GCP, Azure, Lambda, or on-prem), inference servers (vLLM, TGI, Ollama), and observability. We hand it off with runbooks your existing platform team can operate.
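Quantization is what makes hardware sizing tractable: weight memory is roughly parameters × bits per weight / 8. A back-of-the-envelope sketch, assuming a hypothetical 30% of each GPU is reserved for the KV cache and activations; real sizing also depends on context length and concurrency.

```python
import math


def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    # Each parameter takes bits/8 bytes; billions of params x bytes = GB (1e9 bytes).
    return params_billion * bits_per_weight / 8


def gpus_needed(params_billion, bits_per_weight, gpu_memory_gb, headroom=0.3) -> int:
    """GPUs needed to hold the weights, reserving `headroom` of each GPU for KV cache etc."""
    usable_per_gpu = gpu_memory_gb * (1 - headroom)
    return math.ceil(weight_memory_gb(params_billion, bits_per_weight) / usable_per_gpu)


# A 70B-parameter model on 80 GB GPUs:
print(weight_memory_gb(70, 16), gpus_needed(70, 16, 80))  # 140.0 3
print(weight_memory_gb(70, 4), gpus_needed(70, 4, 80))    # 35.0 1
```

In practice, tensor-parallel serving (as in vLLM) often needs a GPU count that evenly divides the model's attention heads, so a result of 3 usually becomes 4.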

We deploy the vector store and embedding pipeline inside your environment. Documents, embeddings, and retrieval logs all stay private. Indexing runs on a schedule against your own data sources (file shares, S3, Notion, Confluence, internal databases), and nothing it produces leaves your perimeter.
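To make "retrieval stays inside your perimeter" concrete, here is the shape of the index-and-search loop. A toy sketch only: the `embed` function is a bag-of-words stand-in for a self-hosted embedding model, and `LocalIndex` is a hypothetical in-memory class standing in for a real vector store.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for a self-hosted embedding model: a term-count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LocalIndex:
    def __init__(self) -> None:
        self.docs = []  # (doc_id, text, vector), all held in local memory

    def add(self, doc_id: str, text: str) -> None:
        self.docs.append((doc_id, text, embed(text)))

    def search(self, query: str, k: int = 3) -> list[str]:
        q = embed(query)
        ranked = sorted(self.docs, key=lambda d: cosine(q, d[2]), reverse=True)
        return [doc_id for doc_id, _, _ in ranked[:k]]
```

Swapping the toy `embed` for a real embedding model changes retrieval quality, not the data flow: documents, vectors, and queries never cross the network boundary.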

Self-hosted is often the most direct route through compliance review for HIPAA, SOC 2, GDPR, and data-residency requirements: your data never leaves your environment, audit logs stay under your control, and access policies are yours to define. We've helped teams pass security reviews that disqualified every public API option on the table.
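Because the stack runs in your environment, access policy and audit trail are ordinary code and data you own. A minimal sketch, assuming a hypothetical role-to-collection policy; `POLICY`, `AuditLog`, and `authorize` are illustrative names, and a production system would persist the log to storage your team controls.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: which document collections each role may retrieve from.
POLICY = {
    "support": {"kb", "tickets"},
    "finance": {"kb", "invoices"},
}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, collection: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "collection": collection,
            "allowed": allowed,
        })


def authorize(user: str, role: str, collection: str, log: AuditLog) -> bool:
    # Unknown roles get no access; every decision is logged, allowed or denied.
    allowed = collection in POLICY.get(role, set())
    log.record(user, role, collection, allowed)
    return allowed
```

Logging denials as well as grants is what makes the trail useful in a security review.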

Open-source models improve every quarter. We monitor the landscape, benchmark new releases against your evaluation set, and roll out upgrades behind a feature flag. You also get continuous fine-tuning on your latest data, so the system gets sharper over time instead of going stale.
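A common way to put a model upgrade behind a feature flag is a deterministic percentage rollout: hash each user ID into a bucket from 0 to 99 and send buckets below the rollout percentage to the candidate model. A minimal sketch; the flag keys and the model names `model-v1` and `model-v2` are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, percent: int) -> bool:
    # Stable bucket in [0, 100): the same user always lands in the same bucket.
    digest = hashlib.sha256(user_id.encode()).digest()
    bucket = int.from_bytes(digest[:2], "big") % 100
    return bucket < percent


def choose_model(user_id: str, flags: dict) -> str:
    if in_rollout(user_id, flags["candidate_rollout_percent"]):
        return flags["candidate_model"]
    return flags["current_model"]


flags = {
    "current_model": "model-v1",
    "candidate_model": "model-v2",
    "candidate_rollout_percent": 10,  # raise gradually as evaluations hold up
}
```

Because buckets are fixed, raising the percentage only adds users to the candidate group; nobody flips back and forth between models, and setting it to 0 is an instant rollback.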

Switching to a public API stays an option. Self-hosted doesn't lock you in: the agents, prompts, and integrations we build sit on an abstraction layer that swaps providers cleanly. If you decide a public API fits a workload better, you flip a config flag instead of rewriting the system.
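The abstraction layer can be as thin as one interface plus a config-driven factory. A minimal sketch using `typing.Protocol`; the provider classes are stubs that echo instead of calling a real server or SDK, and the config keys (`provider`, `base_url`, `model`) are hypothetical.

```python
from typing import Protocol


class ChatProvider(Protocol):
    def complete(self, prompt: str) -> str: ...


class SelfHostedProvider:
    def __init__(self, base_url: str) -> None:
        self.base_url = base_url

    def complete(self, prompt: str) -> str:
        # Stub: a real version would call the inference server at self.base_url.
        return f"[self-hosted {self.base_url}] {prompt}"


class PublicAPIProvider:
    def __init__(self, model: str) -> None:
        self.model = model

    def complete(self, prompt: str) -> str:
        # Stub: a real version would call the vendor's SDK with self.model.
        return f"[public-api {self.model}] {prompt}"


def make_provider(config: dict) -> ChatProvider:
    """Build the provider named in config; application code only sees ChatProvider."""
    kind = config["provider"]
    if kind == "self_hosted":
        return SelfHostedProvider(config["base_url"])
    if kind == "public_api":
        return PublicAPIProvider(config["model"])
    raise ValueError(f"unknown provider: {kind!r}")


def answer(provider: ChatProvider, question: str) -> str:
    # Agents and integrations depend only on .complete(), never on a concrete class.
    return provider.complete(question)
```

Changing `config["provider"]` is the "config flag" flip; nothing that calls `answer` needs to change.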

One conversation to size the hardware, pick the right open-source models, and chart the move from public APIs to private inference.